Key Takeaways

AI data centers require 36 times more fiber than traditional CPU deployments, with single hyperscale campuses needing up to 8 million miles of optical fiber for GPU cluster interconnects.

G657A2 (ITU-T G.657.A2) bend-insensitive fiber, with a 7.5 mm minimum bend radius, is now the standard choice for high-density AI data centers. It lets cables route through tight conduits with minimal added bend loss at 400G to 1.6T speeds.

The IEEE 802.3dj standard (expected to be ratified in mid-2026) enables 800G over 8 fibers and 1.6 Tb/s over 16 fibers, so IT teams should plan for forward compatibility today when selecting trunk cables and connectors.

U.S. fiber infrastructure must more than double, from 159.6 to 372.9 million miles by 2029, creating supply chain constraints that make it necessary to plan fiber procurement 6-12 months ahead of deployment.

Multicore and hollow-core fiber technologies are entering commercial deployments, with hollow-core offering 30% latency reduction—critical for financial trading and real-time AI inference applications.

Third-party maintenance on fiber-connected networking equipment delivers 30-70% cost savings versus OEM support, enabling IT teams to extend hardware lifecycles beyond vendor end-of-support dates.

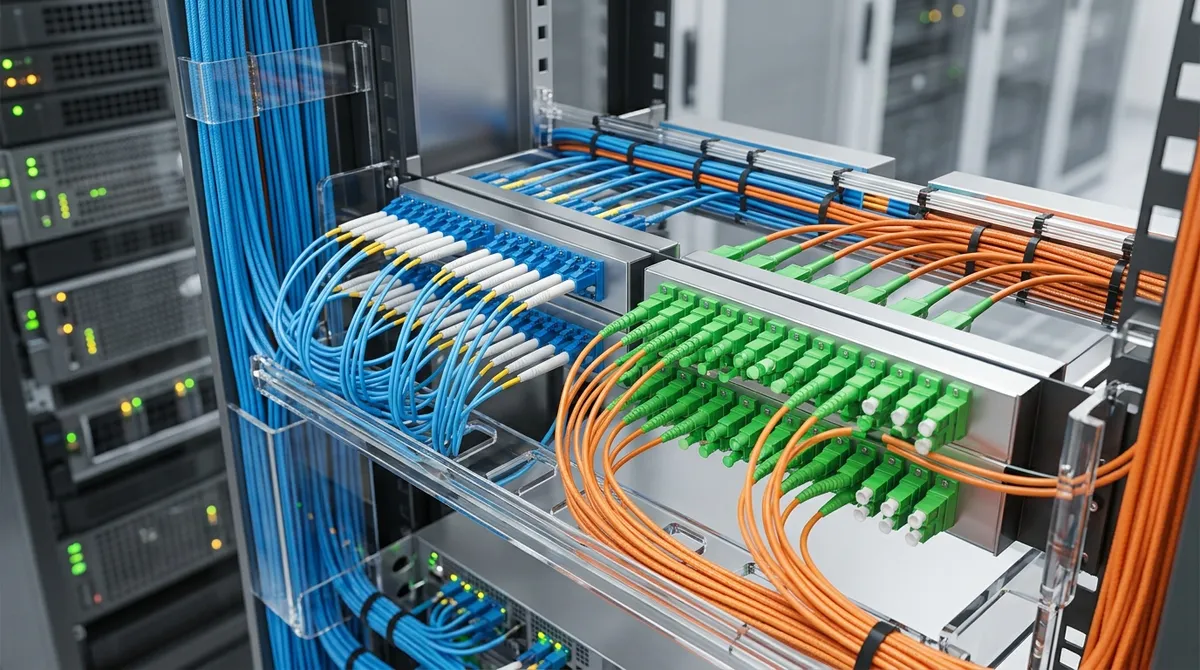

Enterprise networks and data centers are under unprecedented pressure in 2026. The explosion of AI workloads, hyperscale computing, and high-density server deployments has redefined what IT infrastructure must deliver — and fiber optic cabling sits at the center of that transformation. Unlike legacy copper-based systems, fiber optic cabling transmits data as pulses of light, enabling dramatically higher bandwidth, lower latency, and longer transmission distances without signal degradation. For enterprise IT managers, data center operators, and network infrastructure teams, understanding the capabilities and requirements of modern fiber optic cabling is no longer optional — it is foundational to building scalable, high-performance IT environments. This article explores the technical landscape of fiber optic cabling in 2026, covering key cable types, emerging standards, AI-driven demand trends, and the structured cabling architectures that keep enterprise networks operating at peak efficiency.

Understanding Fiber Optic Cabling: Core Types and Specifications

Fiber optic cabling broadly falls into two categories: single-mode fiber (SMF) and multimode fiber (MMF). Single-mode fiber uses a narrow core (~9 microns) that carries a single propagation mode of light, which limits dispersion and supports very long distances. That makes it ideal for campus backbone connections and wide-area links. Multimode fiber uses a larger core (~50 or 62.5 microns) that carries multiple light modes simultaneously and is optimized for shorter intra-datacenter runs at very high speeds.

Within these categories, specific fiber standards define performance characteristics. G657A2 (ITU-T G.657.A2) bend-insensitive single-mode fiber has emerged as the gold standard for high-density data center deployments in 2026, offering a minimum bend radius of just 7.5 mm. This lets cables route through tight conduits and dense GPU cluster environments with minimal added bend loss, a critical advantage as rack densities continue to increase.

| Fiber Type | Core Diameter | Typical Distance | Primary Application | Max Speed Support |
|---|---|---|---|---|
| Single-Mode (OS2) | 9 µm | Up to 10 km+ | Campus/WAN backbone | 400G – 1.6T |
| Multimode (OM4) | 50 µm | Up to 400 m | Data center intra-rack | 100G – 400G |
| Multimode (OM5) | 50 µm | Up to 150 m | Short-wave WDM links | 200G – 800G |
| G657A2 Bend-Insensitive | 9 µm | Up to 10 km+ | GPU clusters / high-density DC | 800G – 1.6T |
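As a rough illustration of how the bend-radius specification can be applied during pathway design, the sketch below flags bends that are tighter than a fiber type allows. Only the 7.5 mm figure for G657A2 comes from this article. The other table entries, the function name, and the sample route are assumptions for illustration and should be replaced with the values on the cable vendor's data sheet.

```python
# Minimum bend radius in millimetres, keyed by fiber type.
# 7.5 mm for G657A2 is taken from the article; the other two values are
# illustrative assumptions, not vendor specifications.
MIN_BEND_RADIUS_MM = {
    "G657A2": 7.5,
    "G657A1": 10.0,  # assumption
    "G652D": 30.0,   # assumption
}


def bends_too_tight(fiber_type, route_bend_radii_mm):
    """Return the bend radii (mm) on a route that are below the fiber's minimum."""
    limit = MIN_BEND_RADIUS_MM[fiber_type]
    return [radius for radius in route_bend_radii_mm if radius < limit]


# Hypothetical bend radii measured along one cable pathway.
route = [40.0, 12.0, 8.0]

print(bends_too_tight("G657A2", route))  # []
print(bends_too_tight("G652D", route))   # [12.0, 8.0]
```

An empty list means every bend on the route is within the limit for that fiber type.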

The AI-Driven Fiber Demand Surge in 2026

The scale of fiber optic cabling demand in 2026 is difficult to overstate. AI data centers require approximately 36 times more fiber than traditional CPU rack deployments. A single hyperscale AI campus may require up to 8 million miles of optical fiber to support the interconnects between GPU clusters, storage arrays, and networking switches. According to industry projections, AI data center fiber demand is growing at over 50% annually, driven by the transition to 800G and 1.6T speeds.

Five major hyperscalers are projected to spend between $660 billion and $690 billion on AI infrastructure in 2026 alone. To support this expansion, the total U.S. fiber miles in service must more than double, from 159.6 million miles currently to 372.9 million miles by 2029, which requires adding 213.3 million fiber miles. Strategic supply agreements, such as Corning’s $6 billion fiber cable deal with Meta extending through 2030, reflect the long-term commitment vendors are making to close this supply gap. For enterprise IT teams, this macro trend directly affects procurement timelines and the availability of high-fiber-count cabling assemblies.
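The fiber-mile figures above can be checked with a few lines of arithmetic. This is only a sanity check on the numbers quoted in this article, and the variable names are illustrative.

```python
current_miles = 159.6  # million fiber miles in service today (from the article)
target_miles = 372.9   # million fiber miles needed by 2029 (from the article)

added_miles = round(target_miles - current_miles, 1)
growth_factor = round(target_miles / current_miles, 2)

print(added_miles)    # 213.3
print(growth_factor)  # 2.34
```

The required addition matches the 213.3 million miles quoted above, and the implied growth factor is roughly 2.3 times the current installed base.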

Key Fiber Optic Cabling Standards for Enterprise IT

Staying current with evolving IEEE standards is essential for IT procurement specialists and network infrastructure teams planning multi-year capital investments. The upcoming IEEE 802.3dj standard, expected to be ratified in mid-2026, defines two critical configurations for AI workloads:

- 800G over 8 fibers, at a per-lane rate of 200 Gb/s (four lanes, each with one transmit and one receive fiber)

- 1.6 Tbps over 16 fibers — at 200 Gb/s lane rates

These specifications will standardize the physical layer for next-generation switch-to-switch and server fabric connections in AI data centers. Network infrastructure teams should account for these lane configurations when selecting pre-terminated fiber trunk cables, MPO/MTP connectors, and patch panel systems today, ensuring forward compatibility as equipment refreshes occur.
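To make the relationship between link rate, lane rate, and fiber count concrete, here is a minimal sketch. It assumes parallel optics with one transmit fiber and one receive fiber per lane and no wavelength multiplexing; the function name is hypothetical.

```python
def fibers_for_parallel_link(link_rate_gbps, lane_rate_gbps):
    """Fibers needed for a parallel-optics link.

    Assumes one transmit and one receive fiber per lane
    (no wavelength multiplexing on a shared fiber).
    """
    lanes, remainder = divmod(link_rate_gbps, lane_rate_gbps)
    if remainder:
        raise ValueError("link rate must be a whole multiple of the lane rate")
    return 2 * lanes


print(fibers_for_parallel_link(800, 200))   # 8
print(fibers_for_parallel_link(1600, 200))  # 16
```

The same calculation can be used to size trunk cables: multiply the per-link fiber count by the number of links a trunk must carry, then add spare fibers according to your own design policy.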

For enterprise deployments outside of hyperscale AI environments, the Network World resource library provides detailed technical guidance on applying current cabling standards across mixed-speed enterprise LAN and data center environments.

Structured Cabling Architectures for High-Density IT Environments

Structured cabling methodology provides the organized framework that transforms individual fiber runs into a manageable, scalable infrastructure. For enterprise data centers and campus networks, a well-designed structured cabling system reduces operational complexity, simplifies troubleshooting, and supports rapid equipment changes without service disruption.

The following structured cabling hierarchy is standard for modern enterprise data center deployments utilizing fiber optic cabling:

- Main Distribution Area (MDA): Houses core switching and routing equipment; uses high-fiber-count trunk cables (288–1,728+ fiber strands) with MPO/MTP pre-terminated connectors.

- Horizontal Distribution Area (HDA): Contains aggregation/distribution switches connected to the MDA by backbone trunks, optionally through an Intermediate Distribution Area (IDA); typically uses OM4/OM5 or OS2 patch panels.

- Zone Distribution Area (ZDA): Optional intermediate consolidation point for flexible, high-density server row deployments.

- Equipment Distribution Area (EDA): Top-of-rack (ToR) switches and server connections using short-run multimode or active optical cables (AOCs).

| Cabling Component | Function | Connector Type | Recommended Fiber |
|---|---|---|---|
| Trunk Cable (High-Density) | MDA-to-HDA backbone runs | MPO-12 / MPO-16 | OM4 / OS2 |
| Breakout Cable | MPO to LC/SC duplex fan-out | MPO to LC | OM4 / OM5 |
| Patch Panel | Cross-connect and management | LC / SC / MPO | OM4 / OS2 |
| Active Optical Cable (AOC) | Short intra-rack server links | QSFP / OSFP | Integrated MMF |
| Ribbon Fiber Cable | Ultra-high-density backbone | MPO-12 / MPO-24 | OS2 / OM5 |
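When sizing pre-terminated trunks, it can help to estimate how many MPO connectors are needed per cable end. The sketch below is a simple ceiling division under the assumption that every connector is fully populated; the function name is hypothetical, and the fiber counts are taken from the ranges mentioned in this article.

```python
import math


def connectors_needed(fiber_count, fibers_per_connector):
    """MPO connectors needed at one end of a trunk, assuming full population."""
    return math.ceil(fiber_count / fibers_per_connector)


for fibers in (288, 1728):
    for mpo_size in (12, 16, 24):
        count = connectors_needed(fibers, mpo_size)
        print(f"{fibers}-fiber trunk with MPO-{mpo_size}: {count} connectors per end")
```

For example, a 288-fiber trunk works out to 24 MPO-12 connectors or 18 MPO-16 connectors per end, which feeds directly into patch panel port planning.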

Emerging Fiber Technologies: Multicore and Hollow-Core Fiber

Beyond conventional single-mode and multimode designs, advanced fiber technologies are beginning to enter commercial data center interconnect deployments. Two of the most significant are multicore fiber (MCF) and hollow-core fiber (HCF).

- Multicore Fiber: Encases multiple fiber cores within a single cladding structure, dramatically increasing fiber density per cable run. This reduces conduit congestion and simplifies cable management in space-constrained environments.

- Hollow-Core Fiber: Transmits light through an air-filled core rather than glass, achieving latency reductions of approximately 30% compared to conventional glass fiber — a critical advantage for latency-sensitive financial, trading, and real-time AI inference applications.
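The latency advantage of hollow-core fiber follows from the speed of light in the guiding medium. The sketch below estimates one-way propagation delay per kilometre from the group index. Both index values are assumed typical figures rather than measurements of any specific product, and the function name is hypothetical.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum, km/s


def latency_us_per_km(group_index):
    """One-way propagation delay in microseconds per kilometre."""
    return group_index / C_KM_PER_S * 1e6


glass = latency_us_per_km(1.468)   # assumed group index for solid silica fiber
hollow = latency_us_per_km(1.003)  # assumed group index for an air-filled core

reduction = 1 - hollow / glass

print(f"glass:  {glass:.2f} us/km")
print(f"hollow: {hollow:.2f} us/km")
print(f"reduction: {reduction:.0%}")
```

With these assumed indices the delay drops from about 4.9 to about 3.3 microseconds per kilometre, in line with the roughly 30% reduction cited above. This covers propagation delay only; transceiver and switching latency are unchanged.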

The combined market for next-generation optical fiber technologies (multicore and hollow-core) is projected to reach $1.05 billion by 2031, growing at a compound annual growth rate of 25.4%. Enterprise IT managers and data center operators planning 5–7 year infrastructure roadmaps should evaluate pilot deployments of these technologies, particularly for core interconnects and spine-layer cabling. For emerging insights on optical infrastructure planning, Technology Reseller News regularly covers vendor announcements and deployment case studies relevant to enterprise IT decision-makers.

Fiber Optic Cabling Considerations for Enterprise Procurement

IT procurement specialists and mid-sized business owners face a distinct set of challenges when sourcing fiber optic cabling components for network upgrades or new deployments. Supply chain constraints driven by AI hyperscaler demand are compressing availability windows for high-fiber-count cables and MPO/MTP assemblies. Planning procurement 6–12 months ahead of deployment timelines is increasingly necessary in 2026.
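As a small planning aid, the 6–12 month guidance can be turned into an ordering window for a given deployment date. This is a minimal sketch using only the Python standard library; the helper name and the example date are hypothetical.

```python
import calendar
from datetime import date


def months_before(day, months):
    """Return the date `months` calendar months before `day`.

    The day of month is clamped to the last day of the target month.
    """
    index = day.year * 12 + (day.month - 1) - months
    year, month_zero_based = divmod(index, 12)
    month = month_zero_based + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(day.day, last_day))


deployment = date(2027, 3, 31)  # hypothetical deployment date

print(months_before(deployment, 12))  # 2026-03-31
print(months_before(deployment, 6))   # 2026-09-30
```

Orders placed between the two printed dates fall inside the recommended window for that deployment.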

Cost optimization without compromising performance is another critical consideration. Pre-owned and refurbished networking equipment — including fiber patch panels, structured cabling enclosures, and compatible transceiver modules — can significantly reduce capital expenditure while maintaining IEEE-compliant performance specifications. Trifecta Networks specializes in providing enterprise-grade pre-owned networking hardware, including refurbished cabling infrastructure components and compatible optical transceivers, enabling IT teams to build high-performance fiber networks at reduced cost. You can also browse our current Cisco certified pre-owned inventory for compatible networking hardware that pairs with your fiber optic cabling strategy.

Key Procurement Checklist for Fiber Optic Cabling Projects

- Confirm fiber type compatibility (OS2, OM4, OM5) with existing and planned transceiver modules

- Validate MPO/MTP polarity configuration (Method A, B, or C) before ordering trunk assemblies

- Specify insertion loss and return loss requirements per TIA-568 standards for all connector terminations

- Verify bend radius specifications for routing paths — G657A2 where tight bends are unavoidable

- Assess ribbon vs. loose-tube construction based on splice vs. connector termination plans

- Confirm cable jacket ratings (OFNR, OFNP) for compliance with building code requirements
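The insertion loss item in the checklist is usually verified with a link loss budget. The sketch below adds up fiber attenuation, connector loss, and splice loss and compares the total with an allowed channel loss. Every numeric value in the example is an illustrative assumption, not a figure from TIA-568 or a vendor data sheet, and the function name is hypothetical.

```python
def link_loss_db(length_km, attenuation_db_per_km,
                 mated_pairs, loss_per_pair_db,
                 splices=0, loss_per_splice_db=0.0):
    """Estimated end-to-end insertion loss of a passive fiber link, in dB."""
    fiber_loss = length_km * attenuation_db_per_km
    connector_loss = mated_pairs * loss_per_pair_db
    splice_loss = splices * loss_per_splice_db
    return fiber_loss + connector_loss + splice_loss


# Hypothetical 150 m multimode link with two mated MPO connector pairs.
estimated = round(link_loss_db(
    length_km=0.15,
    attenuation_db_per_km=3.0,  # assumed attenuation
    mated_pairs=2,
    loss_per_pair_db=0.35,      # assumed low-loss connector grade
), 2)

allowed = 1.9  # assumed channel loss budget from the transceiver data sheet

print(estimated)             # 1.15
print(estimated <= allowed)  # True
```

Substitute the attenuation, connector, and budget values from your own cable and transceiver specifications before relying on the result.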

Third-Party Maintenance Support for Fiber-Dependent Infrastructure

As fiber optic cabling becomes more deeply integrated into enterprise IT architecture, the continuity of maintenance support for fiber-connected equipment grows increasingly important. Hardware refresh cycles for switches, transceivers, and optical line cards often lag behind vendor end-of-support dates, leaving network teams managing equipment beyond OEM coverage windows.

Third-party maintenance (TPM) programs provide a cost-effective alternative to OEM support contracts for fiber-connected networking equipment. For enterprise IT managers looking to extend hardware life cycles while maintaining service-level commitments, explore Tri-Net third-party maintenance services from Trifecta Networks — designed to support multi-vendor environments including switches, routers, and optical network equipment operating across fiber optic cabling infrastructures. You can also learn more about infrastructure planning best practices through resources like Tom’s Hardware, which covers enterprise networking hardware performance benchmarks and reviews.

| Support Type | Coverage | Cost Profile | Best For |
|---|---|---|---|
| OEM Support Contract | Current hardware only | High | New equipment within warranty |
| Third-Party Maintenance (TPM) | EoL and current hardware | 30–70% less than OEM | Extended hardware life cycles |
| Time & Materials | On-demand only | Variable | Low-criticality secondary systems |

Conclusion: Building a Future-Ready Fiber Infrastructure

Fiber optic cabling is not simply a connectivity medium — it is the foundational layer upon which modern enterprise IT performance, AI workload capacity, and data center scalability are built. In 2026, the technical demands placed on fiber infrastructure have intensified dramatically, driven by 800G and 1.6T speed requirements, AI GPU cluster densities demanding 36x more fiber than traditional deployments, and the emergence of advanced fiber technologies like multicore and hollow-core designs. For IT managers, data center operators, network infrastructure teams, and procurement professionals, making informed decisions about fiber type selection, structured cabling architecture, and procurement strategy is directly tied to network performance outcomes and total cost of ownership.

Whether you are planning a greenfield data center deployment, upgrading an existing campus network, or extending the life of fiber-connected hardware beyond OEM support windows, partnering with an experienced IT hardware solutions provider makes a measurable difference. Visit us on Google to see how Trifecta Networks has helped enterprise customers build reliable, cost-optimized network infrastructure. Ready to take the next step? Request a customized hardware quote from our team and get expert guidance on fiber-compatible networking equipment, refurbished hardware sourcing, and maintenance support tailored to your infrastructure requirements.

FAQs

Q: What is G657A2 bend-insensitive fiber and why is it preferred in AI data centers?

A: G657A2 (ITU-T G.657.A2) is a single-mode fiber specification engineered to maintain signal integrity at a minimum bend radius of 7.5 mm, compared with the 30 mm radius at which standard G.652.D single-mode fiber is specified. In AI data center environments where GPU cluster cabling must route through extremely tight conduit paths and dense overhead trays, G657A2 greatly reduces the macrobend-induced signal loss that would otherwise degrade link performance at 400G, 800G, and 1.6T speeds.

Q: How much more fiber do AI data centers require compared to traditional CPU-based deployments?

A: A single GPU rack in an AI data center requires approximately 36 times more fiber connectivity than a traditional CPU rack, due to the high-radix interconnect topologies used in GPU cluster fabrics. At the campus level, a single hyperscale AI facility may require up to 8 million miles of optical fiber to fully support all switch-to-switch, server-to-switch, and storage interconnect paths.

Q: What speeds will the IEEE 802.3dj standard enable for fiber optic networks?

A: The IEEE 802.3dj standard, expected to be ratified in mid-2026, defines physical layer specifications for 800 Gigabit Ethernet over 8 fibers and 1.6 Terabit Ethernet over 16 fibers, both operating at 200 Gb/s per lane. These configurations are specifically designed to meet the bandwidth and latency requirements of AI training and inference workloads in hyperscale data center environments.

Q: What is the difference between MPO-12 and MPO-16 connectors in structured fiber cabling?

A: MPO-12 connectors terminate 12 fiber strands in a single rectangular ferrule, while MPO-16 connectors terminate 16 strands in a similarly sized ferrule with a modified key and guide-pin arrangement, so the two types do not mate with each other. MPO-16 is increasingly preferred for 400G and 800G parallel optics applications because an 8-lane link uses all 16 fibers, whereas an 8-fiber link on MPO-12 leaves four positions unused. This higher fiber utilization reduces the number of connectors required in high-density patch panel installations.

Q: When should enterprise IT teams consider third-party maintenance for fiber-connected networking equipment?

A: Third-party maintenance (TPM) becomes strategically advantageous when networking hardware supporting fiber optic cabling infrastructure reaches or approaches OEM end-of-support dates but remains operationally viable. TPM contracts typically deliver 30–70% cost savings versus OEM renewals and provide continued hardware replacement, remote technical support, and on-site field services — enabling IT teams to extend equipment life cycles and defer capital refresh expenditures.